DigiTIPS 2023 Program Details

2023 Confirmed Speakers

*As of MARCH 21; talk details and final schedule will be announced at least 2 weeks before each session. Speakers are still being added; those listed are confirmed.

Session 1: Updating and Developing Standards, Guidelines, and Specs

THURSDAY 23 FEBRUARY 10:00-15:00 New York time

International standards and guidelines are the framework around which cultural heritage organizations capture and share digital surrogates of their collections. What are those standards, and how are they changing? How are they being utilized by cultural heritage organizations? This session provides a look at current and emerging standards, as well as how they can be used by practitioners.

10:00-10:10

Introduction to DigiTIPS 2023 and Focus of Session 1, Series Chair and Program Organizer Hana Beckerle, Library of Congress

10:10-10:20

Why Standards?, Jeanine Nault, program officer, Smithsonian Institution

Abstract:

A brief look at why standards and guidelines are important for use in the cultural heritage community.

10:20-11:20

Updating Standards: Third Edition of the FADGI Technical Guidelines for Digitizing Cultural Heritage Materials, Roger Triplett* and Don Williams, CEO, Image Science Associates

Abstract:

Members of the FADGI Still Image Working Group provide an overview of the latest FADGI Technical Guidelines for Digitizing Cultural Heritage Materials. This presentation focuses on the revision process, changes to evaluation parameters and other best practices within the Guidelines, and next steps for increasing the utility of the Guidelines for the cultural heritage digitization community.

Break

11:45-12:30

Developing Standards and Best Practices: Transmissive Materials, Patrick Breen*, digital imaging specialist, and Thomas Rieger, manager, digitization services, Digital Services Directorate, Library of Congress

Abstract:

What is the process for developing standards, guidelines, and documented best practices? This session will offer an overview of one approach, with a case study on developing standards for imaging transmissive materials. The presentation will provide an introduction to this area of research, including what spectral data is and why it’s important to use for imaging. This session will also present an overview of the technical process employed to gather data to derive the spectral nature of different media, and the potential applications for this data. This is an emerging area of study, and the formal findings and applications for other cultural heritage imaging professionals have not been published, but this presentation will offer insights into how such research becomes formalized into standards.

12:30-13:00

Developing FADGI Standards for Imaged Audio, Hana Beckerle, digital imaging specialist, Library of Congress

Abstract:

In 2023, the Library of Congress is beginning an exploratory project with partners from the Federal Agencies Digital Guidelines Initiative (FADGI) Audio/Visual and Still Image Working Groups to develop guidelines for imaged audio content. The output of imaged audio digitization processes is a complex package of content which may include high-resolution 2D or 3D image files of the audio carrier, primary and access audio files, log and data files about processing and system settings, as well as other files. This project seeks to define, for the digital preservation community, standard output packages for imaged audio created by non-contact scanning. This presentation will provide a high-level overview of imaged audio technology and the process this working group will follow to develop guidelines and best practices for this emerging area of cultural heritage digitization.

Break

13:30-14:15

“What are the Specs?” The Case for Standardized Digitization Project Specifications, Jim Studnicki, president, Creekside Digital

Abstract:

For as long as we have been digitizing books, newspapers, photographs, boxed records, and other still image materials, the lack of standardization in project specifications has hampered efforts in creating consistent, quality digitized assets as well as vending out such services. While the FADGI guidelines have defined how master files should be created, they leave out other elements commonly encountered in digitization projects. In this presentation, Creekside Digital's Jim Studnicki explores what standardized, machine-readable digitization project specifications might look like and examines how they can increase value per dollar spent on digitization, reduce start-up time, mitigate the risk of a failed project, and ensure compliance with the relevant Federal quality guidelines (specifically, FADGI and M-19-21), all while encouraging reuse and reducing and sometimes eliminating barriers for non-expert personnel participating in multiple stages of these projects. Also included is a discussion of open sourcing / open publishing these specifications as well as a brief look at some real-life examples and actual working software.

14:15-15:00

Moderated Group Discussion with Presenters

Session 2: Program Development and Management

THURSDAY 23 MARCH 10:00-15:00 New York time

In addition to technical aspects of digitization, cultural heritage organizations must consider how to best grow, manage, and maintain their digitization programs. Standards and best practices can provide guidance on many aspects of program development and management, such as determining needs for your program, planning for staffing needs and equipment acquisition, scaling digitization operations up or down over time, and responding to challenges and constraints. This session provides insights from organizations that have undergone significant growth and development or have successfully utilized standards and best practices for managing specific aspects of their programs.

10:00-10:05

Introduction to Session 2, Series Chair and Program Organizer Hana Beckerle, Library of Congress

10:05-10:50

Back to Basics: Implementing a FADGI-Compliant Workflow in Your Digitization Program, Matthew Breitbart, digital imaging specialist, Library of Congress

Abstract:

An introduction for some and a refresher for others, this session provides digitization practitioners and program managers a step-by-step overview of what goes into successful digitization projects, and a checklist as a resource for the successful execution of digitization workflows. With a presentation broken down into “Before, During, and After” sections, session participants learn the basics that are important for any digitization project, from planning stages through to file delivery.

10:50-11:20

Break

11:20-11:50

Three years in the Making: Lessons learned at the General Archives of Puerto Rico and its Digitization Center, Hilda T. Ayala González, General Archivist of Puerto Rico, and Natalia Hernandez Mejías, project manager at the Digitization Center, Instituto de Cultura Puertorriqueña

Abstract:

In 2020 we began our journey to establish a digitization center at the General Archives. For the past three years we have had the opportunity to put into place a successful program that has functioned as the baseline for other digitization initiatives at the agency, but not without overcoming multiple challenges and adapting expectations on a daily basis. During this presentation we share our path and the lessons learned during these years, from conceptualization to deliverables, while trying to follow and implement standards and best practices.

11:50-12:20

All Hands on Deck at Duke University Libraries’ Digital Production Center, Giao Luong Baker, digital production services manager, Duke University Libraries

Abstract:

This presentation showcases Duke University Libraries’ Digital Production Center (DPC) and its role in creating digital collections of rare and unique materials for the purposes of preservation, access, and publication. The talk contextualizes this discussion with an introduction to the staffing, space, and equipment of the DPC and showcases some notable digital collections in the Duke Digital Repository. Program development issues such as stakeholder engagement, determining priorities, and management before, during, and post-COVID response are explored.

12:20-12:45

There's a map for that! The growing pains and learning curves of digitizing a map library in Maine, David R. Neikirk, digital imaging coordinator, Osher Map Library and Smith Center for Cartographic Education, University of Southern Maine

Abstract:

The Digital Imaging Center at the Osher Map Library (OML) is the only known dedicated imaging facility for a cartographic library in the United States. After a significant expansion in 2009, OML established its first digital imaging facility to digitize its vast collections of rare cartographic materials ranging from the 15th to the 21st century. This talk guides you from our naive beginnings with various workflows to adopting FADGI and finally finding our niche in customized digitization and sharing our digital collections with the support of OML's dedicated educational outreach team.

12:45-13:15

Break

13:15-13:55

Rights Management in a Digitization Program, Anne Young, director of legal affairs and intellectual property, Newfields

Abstract:

Rights management and the dissemination of digital surrogates (and other intellectual property assets) maintained by cultural institutions are key responsibilities of caring for collections. How cultural institutions determine the rights status of collection objects is seemingly ever-changing with new technologies, evolving case law, applicable court decisions, and new legislation. This session provides concrete steps to rights management as it applies to digitization, access, and overall file management of digital surrogates.

13:55-14:15

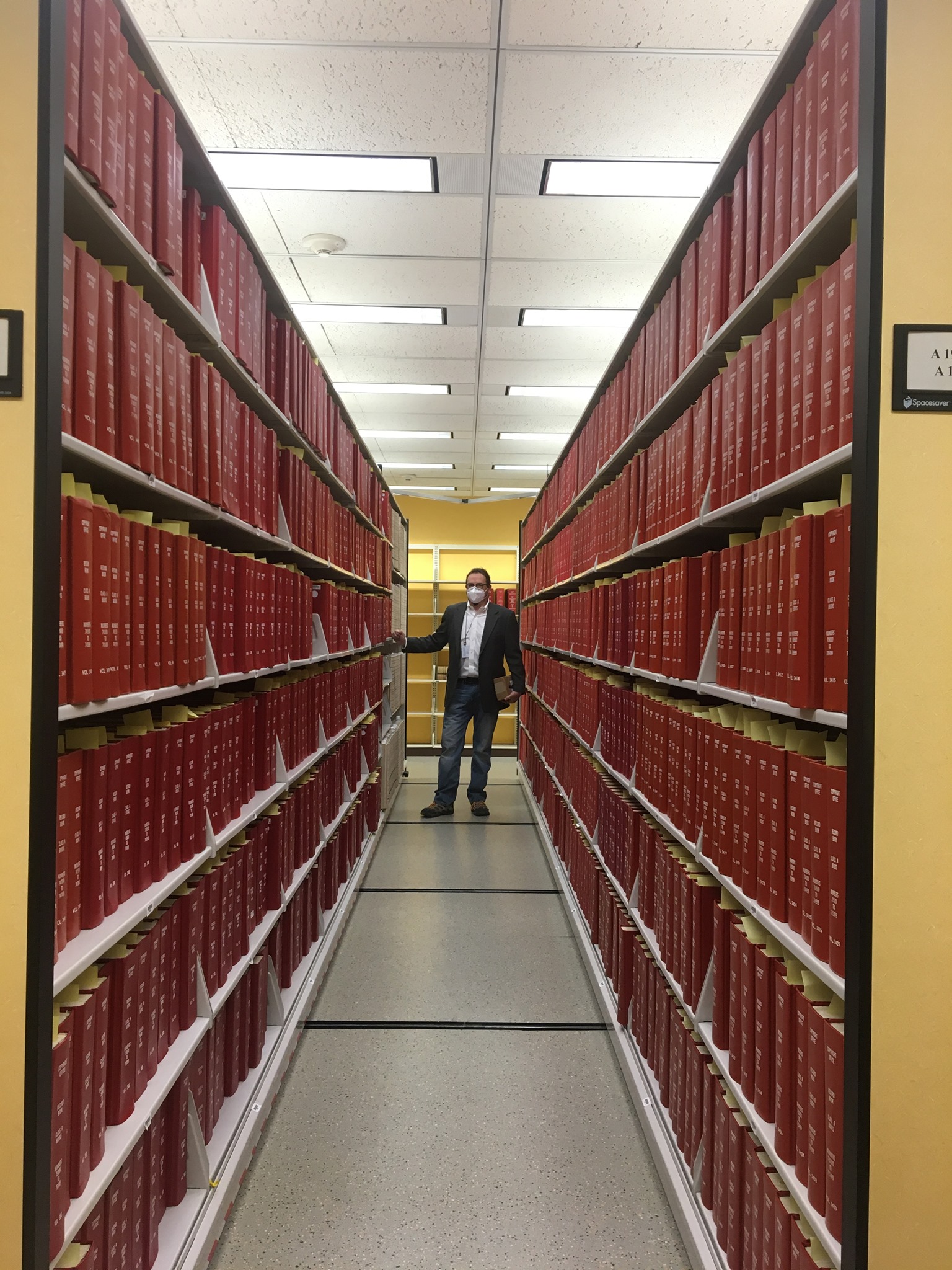

Enhancing the Digitization Program at the US Copyright Office, Kristin Phelps

Enhancing the Digitization Program at the US Copyright Office, Kristin Phelps, digitization manager, Office of Copyright Records, Copyright Office, Library of Congress

Abstract:

The Office of Copyright Records houses more than 26,278 bound volumes, including all official copyright records from 1870-1977, which have great value to researchers and the public. This session presents an overview of the evolution of the Office’s digitization program, including early roadblocks and challenges faced, and subsequent adjustments and improvements made. The presentation provides insights into managing a multi-year, high volume digitization project and the staffing, workflows, and imaging standards utilized.

14:15-15:00

Moderated Group Discussion with Presenters

Session 3: Image Quality

TUESDAY 25 APRIL 10:00-15:00 New York time

The concept of “image quality” may have different connotations depending on the context. Standards and guidelines can assist cultural heritage organizations in improving the technical aspects of their digitization processes in order to produce high quality images. Standards can also guide organizations in developing a quality assurance program at scale. This session provides insights from practitioners and organizations who are utilizing standards to maintain image quality from a technical perspective and at a program level.

10:00-10:05

Introduction to Session 3, Series Chair and Program Organizer Hana Beckerle, Library of Congress

10:05-10:45

Objective Imaging Performance Metrics, Peter D. Burns, president, Burns Digital Imaging LLC

Abstract:

In the past, imaging experts organized under the ISO have developed standardized approaches for evaluating digital camera and scanner imaging performance. Several of these have been adopted in imaging practice guidelines for cultural heritage communities. This talk discusses the use of these important objective measures of imaging performance that are part of these guidelines. These include measures for image resolution, noise, geometric distortion, and color capture.

10:45-11:15

Color Characterization: Overcoming Chart-based Limitations, Dietmar Wueller, CEO and owner, Image Engineering

Abstract:

Characterization of the color reproduction of digitization systems for cultural heritage has been based on color test charts for decades. This approach has caused a variety of problems. One of them is that the charts are usually not made of the same colorants as the originals that get digitized. Depending on how close the camera's sensitivity is to the human visual system, this causes deviations that we have to deal with. Another problem is that for some types of originals, e.g., historic color negative or slide material, there are simply no test charts available, but we still need a good reproduction. The ISO group that is working on the standards for this area is now developing a database that, in combination with spectral measurements of camera and lighting, can and will replace the chart-based method in many cases and make color characterization more accurate.

11:15-11:45

Break

11:45-12:15

Image Quality and the Aspect of Visual Inspection and Automation, Martina Hoffmann, head of digitization service, National Library of Switzerland

Abstract:

Metrics, rules, standards, and workflows do help a lot in ensuring image quality, but they don't tell you all you need to know when you are looking to create images for preservation purposes. At least a few more aspects make a good image a good image. One aspect can be assessed by visual inspection; another goes in the direction of metadata. Making QA work at scale also means you have to automate at least parts of your QC.

12:15-12:45

Item Driven Image Fidelity—Capturing the ‘Sweet Spot’, Nathan Ian Anderson, imaging services team leader, Smithsonian Institution

Abstract:

To ensure that we’re achieving the optimal resolution for any Smithsonian-managed digitization project, we go through a rigorous validation process we call Item Driven Image Fidelity (IDIF). The IDIF analysis results in a project-specific imaging standard by which we measure capture quality for all Smithsonian-managed digitization projects. Our process ensures that fine specimen or item details are resolvable, so remote researchers can do meaningful work with collections even if they are half a world away.

12:45-13:15

Break

13:15-14:00

Quality Control on the Fly in Digitization Projects (with live demonstration), Volker Jansen, technical director and R&D manager, Zeutschel

Abstract:

During most digitization projects where quality standards are applied, image quality is checked in recurring sections. Usually, at the beginning and end of an imaging session or a project, images are checked for compliance with a given quality standard. Whether and how the quality has changed between these tests cannot always be recognized. This presentation describes a method to ensure that image quality can be checked and saved for each individual scan in a project. As a result, image quality is more predictable, more reliable, and repeat imaging is avoided. This presentation includes a demonstration using object-level targets to set up and run an automatic quality control step in line with an existing digitization workflow. The target measures every image against FADGI, Metamorfoze, or ISO quality specifications, without slowing down the productivity of the digitization project.

14:00-15:00

Moderated Group Discussion with Presenters

Session 4: Innovations and Special Projects

WEDNESDAY 24 MAY 10:00-15:15 New York time

This session highlights organizations and practitioners using innovative or specialized methods for digitization, as well as those working with unique or unusual collections, or under special circumstances.

10:00-10:05

Introduction to Session 4

10:05-10:35

Digitizing the US National Herbarium, Sylvia Orli, information management, Smithsonian National Museum of Natural History

Abstract:

The US National Herbarium is one of the largest herbaria in the world, totaling nearly 5 million plant specimens. Up until 2015, the herbarium was largely inaccessible to anyone but botanists who could come to the National Museum of Natural History to see the collection. In 2015, in partnership with the Smithsonian's Digitization Program Office, the Department of Botany embarked on an ambitious project to digitize 4 million pressed plants using a digitization conveyor belt. This presentation describes the 7-year project in detail, documenting the technologies, strategies and efficiencies used to keep up with the fast-moving and unrelenting imaging process.

10:35-11:00

Digitizing 135 Years of History at the National Geographic Society, Kendall Crumpler*, National Geographic

Abstract:

In 2018, the National Geographic Society embarked on an endeavor to digitize all of its library and archival collections accumulated over its 135-year history. The assembled collections consist of approximately 12 million photographs, illustrations, and artwork, as well as thousands of artifacts and research grants. Unsurprisingly, undertaking such a colossal task from scratch with limited personnel and equipment has led to a few bumps and bruises, but the Digital Preservation team has learned a few tricks along the way that we’re keen to share with the cultural heritage community.

11:00-11:30

Break

11:30-12:00

Now in 3D: A Survey of 3D Imaging and Starting a 3D Digitization Program at the National Gallery, Kurt Heumiller, photographer / 3D program coordinator, National Gallery of Art

Abstract:

What do we mean when we say 3D? As the cultural heritage imaging community begins to adopt 3D imaging at an increasing pace, it is important to have a baseline understanding of some of the more common types of 3D data and how they might be used in different use cases. This session provides an introductory overview of some of the use cases, as well as some of the questions asked and challenges faced when starting a 3D digitization program.

12:00-12:30

Documenting Large-scale Diego Rivera Murals in San Francisco: Challenges and Solutions, Carla Schroer, founder and director, Cultural Heritage Imaging

Abstract:

This presentation shows the documentary products from two large fresco murals painted by Diego Rivera. One mural, painted in 1931, is 10.67 meters X 9.14 meters (35’ X 30’) and the other, painted in 1940, is 6.7 meters X 22.4 meters (22’ X 74’). Both frescos were documented using high-resolution photogrammetry to produce 3D models, one containing 5 billion 3D points and the second more than 8 billion points. We share our experience capturing the data, managing the large image sets (~1800 and ~2500 50-megapixel images respectively), and producing multi-gigapixel shape and color maps of the mural surfaces. Outputs of these projects include distortion- and perspective-corrected orthomosaic color 2D digital images, along with registered 2D Digital Elevation Models (DEMs) showing the surfaces’ shape. A DEM is a 2D false color image visualizing the 3D surface shape of the subject. In the DEMs it is possible to see Rivera’s brush strokes in the plaster, pentimento patches in the surface, giornata lines, and condition information such as cracks, abrasions, delamination, and flaking. A discussion of the public access and long-term archiving strategy, including the construction of the metadata record, is included.

12:30-13:00

Break

13:00-13:10

CHANGE Project Case Studies: Program Overview, Jon Yngve Hardeberg, professor, Norwegian University of Science and Technology

Abstract:

Cultural Heritage Analysis for a New Generation (CHANGE) is a European Union-funded project led by the Norwegian University of Science and Technology that supports early-stage researchers in developing methodologies to assess and monitor cultural heritage artifacts. After a brief introduction to the program, participants discuss their specific projects.

13:10-13:30

CHANGE: Changes in Shape and Appearance on heritage surfaces: Let’s identify them!!, Sunita Saha, doctoral student, Warsaw University of Technology

Abstract:

This work presents two novel methods for further post-processing of surface data to identify the location of changes and assess them over time or before and after conservation treatment on heritage objects. The first method classifies the geometry changes from the surfaces by studying the 4D local spatial distribution between two-time data, and the second assesses the changes in appearance by studying the behavior of the reflectance transformation imaging surface reconstruction coefficients. These methods help automate the comparison and assessment of 3D changes over time, improving the digital documentation.

13:30-13:50

CHANGE: Same, Same, but Different: Multiscale Approach to Condition Mapping and Imaging Using Spectral Techniques, Jan Cutajar, doctoral student, University of Oslo, and Silvia Russo, doctoral student, Haute École Neuchâtel Berne Jura

Abstract:

This presentation draws inspiration from the authors’ CHANGE-ITN research, building on the areas of overlap in their PhD work, challenges faced, and their findings. Overall, the talk addresses how the scale of artworks was approached in researching condition mapping and imaging, and how this dictated the methodologies they used. Jan employed imaging-based documentation and analysis for evaluating gel cleaning of unvarnished oil paints on canvas, using VNIR and SWIR hyperspectral imaging and μFTIR, amongst others, to study the monumental Aula paintings by Edvard Munch and related mock-ups. Silvia conducted analysis and assessment of metal soap formation on polychrome metal artworks through μFTIR, with preliminary attempts at using VNIR and SWIR hyperspectral imaging and IR thermography. The presentation explores potential applications of their findings to the world of cultural heritage preservation and imaging for conservation.

13:50-14:00

Stretch Break

14:00-14:30

Digital Content Provenance: The Content Authenticity Initiative, Santiago Lyon, Adobe

Abstract:

The CAI is an Adobe-led, cross-industry, open-source initiative with more than 1,200 members working to show the provenance, or origins, of digital files across formats (still images, video, audio, Gen AI, etc.). Using secure metadata called Content Credentials, we can see what happened to a file from the moment of creation, through editing, and onto publication. That information can be shared with the reader/viewer to help them better understand what they are looking at. This presentation discusses the conditions of the digital content landscape that led to the development of the CAI, current projects, and potential applications for the digital archiving world.

14:30-15:15

Moderated Group Discussion with Presenters

*BIOS for speakers without hyperlinks

Patrick Breen: Breen has worked as a digital imaging specialist at the Library of Congress since August 2022. He earned a BS in Imaging and Photo Technology from Rochester Institute of Technology (RIT). After graduation he worked at the Northeast Document Conservation Center (NEDCC) on digitizing historical documents, and specialized in working on oversized items, as well as printer profiling and reproductions. Following his time at NEDCC, he was a photographic technologist for the United States Air Force, acting as director of operations for the Film Processing Center (FPC). He has also worked as a film projectionist, and his interests include color science, large format item digitization, and film imaging.

Kendall Crumpler: Crumpler has served as digital imaging archivist in the Library & Archive at National Geographic Society since 2018. She previously worked as a contract digitization/museum technician at Smithsonian's National Museum of Natural History in the botany department on their mass digitization program. Prior to that she primarily worked as a field archaeologist throughout the Washington, DC, area. Kendall received her BAs in history and anthropology from Tulane University and an MA in classical art and archaeology from King's College London.

Volker Jansen: Jansen studied Photographic Engineering and earned a degree of “Diplom-Ingenieur” at TH Köln (Cologne University of Technology, Arts, Sciences). He was then responsible for research and development at Homrich Imaging Technik (HIT) based in Hamburg. Since 2003, he has worked at Zeutschel GmbH and is responsible for research and development for all new products the company has developed since then. Jansen is one of the co-founders of the UTT Test Chart and the processes behind it (foundation of ISO standard 19262-19264). He’s a member of DIN NVBF 2 (Standards Committee Event Technology, Image and Film of the German Institute for Standardization (DIN)), and a member of ISO/TC42 JWG26 - Digitizing Cultural Heritage Materials, which is working on ISO standards 19262, 19263, and 19264, among others.

Roger Triplett: After obtaining a BS in imaging science from RIT and an MS in optical engineering from University of Rochester, Triplett worked for 43 years at Xerox in image quality, color science, and image processing in R&D of numerous scanning and printing products. His final position at Xerox was principal engineer manager of the image quality and image processing team within the production printer group. He is currently a consultant for Image Science Associates, where he supports the update of the FADGI standards.