Opportunities to Learn

Program

Archiving is pleased to partner with CHANGE—Cultural Heritage Analysis for New Generations—an EU-funded program led by NTNU staff. A number of the CHANGE Fellows submitted work to Archiving for review; work to be presented related to their CHANGE research is indicated in the program below.

PLEASE NOTE: Online registration is now open for the conference. See the Attend/Register tab.

Monday 19 June 2023

See short course program for classes happening during the day. Short courses take place at OsloMet, 2nd floor of Ellen Gleditschs hus, Pilestredet 35.

17:30 – 19:30

Join colleagues at the Museum of Cultural History, Frederiks gate 2, for a non-alcoholic aperitivo and snack. The museum, housed in a beautiful art nouveau building from 1902, opens to attendees at 17:30 to allow you time to explore some of its collections, specifically Vikingr: Viking Age and Fabulous Animals: From the Iron Age to the Vikings. Food and drinks are served from 18:00.

Tuesday 20 June 2023

09:00 – 10:00

Session Chair: Sony George, NTNU (Norway)

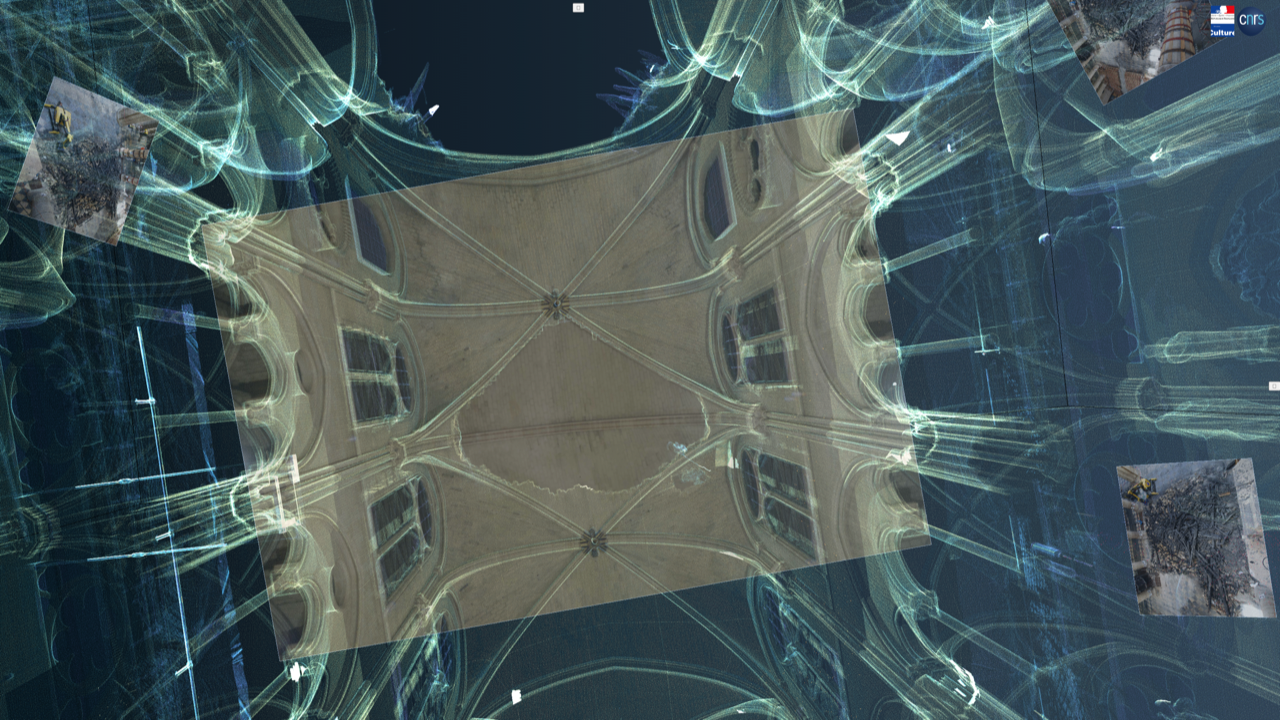

A Cathedral of N-dimensional Data and Multidisciplinary Knowledge in Heritage Science

Livio De Luca, research director, CNRS, and director, MAP Laboratory (France)

Cultural heritage research makes the confrontation between material objects and multidisciplinary studies the arena for the production of collective knowledge. Integrating computational modelling with multidimensional digitization, our project benefits from the scientific framework for the restoration of Notre-Dame de Paris involving 175 researchers from various disciplines. The research aim is to shift the focus of digitization from physical objects to the knowledge surrounding them, to analyze the interdependence of complex morphological features and associated knowledge, and to experiment with innovative semantically-driven data production and analysis methods.

10:00 – 12:30

Session Chair: Pedro Santos, Fraunhofer IGD (Germany)

10:00

In 2021, the Michael C. Rockefeller Wing (MCRW) in the Metropolitan Museum of Art, which focuses on the regions of Asia, Africa, and Oceania, began the process of deinstalling its collection to start renovations on its galleries. The Imaging Department coordinated closely with the curatorial, collections and conservation teams to develop a comprehensive 2D and 3D imaging campaign. This paper centers around the unique challenges our team faced when asked to record, completely and accurately, four monumental New Guinea Ancestor (Bisj) Poles, carved out of the wood of mangrove trees by the Asmat people, on an extremely tight timeline. The decision to employ 3D digitization is always a difficult one because technology is constantly evolving and there are few standards for image quality metrics. This paper documents our team's approach to solving technical challenges.

10:20

A multimodal optomechatronics system is presented for measuring and monitoring change in cultural heritage objects exposed to environmental condition fluctuations or conservation treatments. It combines structured light, 3D colour digital image correlation and multispectral imaging, delivering information about an object's 3D shape, displacements, strains and reflectivity. The high functionality and applicability of the system are presented with the example of historical parchment subjected to changes in relative humidity.

10:40

Reflectance Transformation Imaging (RTI) is a technique that provides an enhanced visualization experience. Current RTI acquisition methods are time consuming and computationally expensive. This work investigates the idea of finding the best light positions for RTI acquisition using surface topography. We propose automating RTI acquisition by estimating the surface topography with a deep learning method and then estimating light positions with an unsupervised clustering method. This is a one-shot method that needs only one image. We also created a synthetic RTI dataset in order to carry out experiments. We found that surface topography alone is not sufficient to estimate the best light positions for RTI without additional constraints.

11:00 – 11:30

Morning Break / Exhibits Open / Posters Available for Viewing

11:30

Simplification of 3D meshes is a fundamental part of most 3D workflows, where the amount of data is reduced to be more manageable for a user. The unprocessed data includes many redundancies and small errors that occur during the 3D acquisition process and that can often be removed safely without jeopardizing its function. Several algorithmic approaches are used across applications of 3D data, each with its own benefits and drawbacks. There is at present no standardized algorithm for cultural heritage. This investigation makes a statistical evaluation of how geometric primitive shapes behave under different simplification approaches and evaluates what information might be lost in an HBIM (Heritage-Building-Information-Modeling) or change-monitoring process of cultural heritage if each of these is applied to more complex manifolds.

11:50

The problem of monitoring and tracking the changes that Cultural Heritage (CH) objects undergo is of high importance. A key task in this workflow is the initial registration of the data in a common reference frame. However, challenges arise when data to be aligned have been acquired in different timeframes (cross-time) and by different imaging techniques (multimodal). This paper addresses those challenges and proposes a pipeline for monitoring both the surface and the inner structure of CH objects. Taking as an input two different sets of 3D models and 3D Volumes acquired in different timeslots from 3D surface and CT scanning respectively, the pipeline registers both modalities in a multitemporal way. The results show the possibilities of this methodology for accurate multitemporal documentation of both surface and inner structure. This approach has the potential to facilitate the monitoring through time and change detection of CH objects in a more holistic way.

12:10

This paper addresses the concerns of the digital heritage field by setting out a series of recommendations for establishing a workflow for 3D objects, increasingly prevalent but still lacking a standardized process, in terms of long-term preservation and dissemination. We build our approach on interdisciplinary collaborations together with a comprehensive literature review. We provide a set of heuristics consisting of the following six components: data acquisition, data preservation, data description, data curation and processing, data dissemination, as well as data interoperability, analysis and exploration. Each component is supplemented by suggestions for standards and tools, which are either already common in 3D practices or represent a high potential component seeking consensus to formalize a 3D environment fit for the Humanities, such as efforts carried out by the International Image Interoperability Framework (IIIF). We then present a conceptual high-level 3D workflow which highly relies on standards adhering to the Linked Open Usable Data (LOUD) design principles.

12:30 – 12:50

Session Chair: Robert Kastler, MOMA (US)

Archiving 2023 Exhibitors provide brief overviews of their services/products.

12:50 - 14:00

LUNCH break on own

14:00 – 15:00

Session Chair: Laura Margaret Ramsey, The Image Centre, Toronto (Canada)

14:00

This paper presents the methodologies to extract the headline and illustrations from a historical newspaper for storytelling to support digital scholarship. It explored the ways in which new digital tools can facilitate the understanding of the newspaper content in the setting of time and space. "The Hongkong News" was selected from Hong Kong Early Tabloid Newspaper for the case study owing to its unique historical value to scholars. The proposed methodologies were evaluated in OCR (Optical Character Recognition) with scraping and Deep Learning Object Detection models. Two visualization products were developed to showcase the feasibility of our proposed methods to serve the storytelling purpose.

14:20

The presence of handwritten text and annotations combined with typewritten and machine-printed text in historical archival records makes them visually complex, posing challenges for OCR systems in accurately transcribing their content. This paper is an extension of [1], reporting on improvements in the separation of handwritten text from machine-printed text (including typewriters), by the use of FCN-based models trained on datasets created from different data synthesis pipelines. Results show a significant increase of about 20% in the intrinsic evaluation on artificial test sets, and an 8% improvement in the extrinsic evaluation on a subsequent OCR task on real archival documents.

14:40

This paper examines two new methodological approaches exploring Reflectance Transformation Imaging (RTI) data processing for detecting, documenting, and tracking surface changes. The first approach is unsupervised and applies per-pixel calculations on the raw image stack to extract information related to specific surface attributes (angular reflectance, micro-geometry). The second method proposes a supervised segmentation approach that, based on machine learning algorithms, uses coefficients of a fitting model to separate the surface’s characteristics and assign them to a class. Both methodologies were applied to monitor coating failure, in the form of filiform corrosion, on low carbon steel test samples, mimicking treated historical metal objects’ surfaces. The results demonstrate the feasibility of creating accurate cartographies that depict the surface characteristics and their location. Additionally, they provide a qualitative evaluation of corrosion progression that allows tracking and monitoring changes on challenging surfaces.

15:00 - 15:30

Session Chair: Roy S. Berns, Gray Sky Imaging (US)

An Overview of CHANGE: Cultural Heritage Analysis for New Generations provided by Jon Y. Hardeberg, NTNU (Norway)

15:30 - 16:00

Afternoon Break / Exhibits Open / Posters Available for Viewing

16:00 - 17:45

Session Chair: Ulla Bøgvad Kejser, Royal Danish Library (Denmark)

16:00

Beyond RGB is a free, open-source software application providing colorimetric and spectral processing of a 6-channel spectral image. The software takes as input two sets of RAW RGB images, one set for each of two different lighting conditions. Each set includes a dark current, flatfield, target, and item image. The outputs are an RGB image that is color calibrated, with data on the accuracy of the calibration, and user-selected spectral reflectance estimations of regions of interest. The improvements created for this version of the software include an updated user interface, auto-sorting of files, improved color difference calculation and visualization, a user-friendly website, and the inclusion of various RAW file types.

16:20

The Pivan web platform is an open-source tool for managing different stages of automatic document processing, such as layout analysis, transcription, and named entity recognition. It allows for the visualization of document segmentation, transcription at the line or paragraph level, and annotation of named entities. Pivan's web-based nature makes it perfectly suited for collaborative annotation and offers a smooth experience, even for small machines or connections. It is based on up-to-date web technologies, it includes a comprehensive API, and it can be easily deployed via Docker.

16:40

Cultural-heritage imaging is a critical aspect of the efforts to preserve world treasures. This field is so demanding of color accuracy that the inherent limitations of RGB imaging can often be an issue. Various imaging systems of increasing complexity have been proposed, up to and including those that report full spectral reflectance for each pixel. These systems improve color accuracy, but their complexity and slow operational speed hamper their widespread use in this field. A simpler and faster bi-color lighting and dual-RGB processing system is proposed that improves the color accuracy of profiling and verification targets. The system can be used with any off-the-shelf RGB camera, including prosumer models.

17:00

Thanks to an Andrew W. Mellon Foundation grant, the General Archives of Puerto Rico started a mass digitization project in 2020. The goal was to establish a digitization center and implement FADGI guidelines. As the project developed and the volume of work grew, a fast and simple way to track the items through their different stages was needed. Although several software options were available, they required more resources than we had on hand at the time. Understanding our needs and goals, our team’s IT technician built an app tailored to the project’s requirements. In the past year, we have not only successfully kept track of the objects through the digitization workflow, but the app also proved effective for maintaining team communication, collecting technical metadata, and recording relationships between objects and their collections.

17:20

The Federal Agencies Digital Guidelines Initiative (FADGI) Technical Guidelines for Digitizing Cultural Heritage Materials: Third Edition was published in May 2023 as a comprehensive revision of the 2016 Technical Guidelines. The latest edition of the guidelines expands on earlier versions and incorporates new material reflecting advances in imaging science and cultural heritage digitization best practices. This paper presents an overview of the document history, the FADGI Still Image Working Group's motivations and approach for this revision project, the updates to the document, and future applications.

17:40

Closing remarks

Wednesday 21 June 2023

09:00 - 10:10

Session Chair: Jon Y. Hardeberg, NTNU (Norway)

Why Are We Surrounded by Edvard Munch’s Aula Commission?

Tine Frøysaker, professor, University of Oslo (Norway)

Munch’s Aula Frieze is the only set of monumental Expressionist canvas paintings in Europe that are still preserved in situ. The 11 Aula paintings cover around 220 square meters. This vast commission was paid for by subscriptions during the end of the first decade of the 20th century and the first years of WWI. There are at least two answers to the question of why we are in this listed assembly hall of Oslo University. The first addresses why the EU-ITN project named CHANGE has its final session right here. The second discusses why the Munch Aula Project called MAP never seems to end. [Image: The Sun (1911, 4,44 m x 7,84 m. Woll no. 970) by Edvard Munch (1863-1944); photograph © Ove Kvavik/UiO, 2011.]

10:10 - 10:30

Session Chair: Hana Beckerle, Library of Congress (US)

A software package and proof of concept (PoC) are introduced to improve usability in cases such as quality issues and metadata collection after digitization. Here usability means improving the process through AI-based automation wherever possible and providing easy-to-use interfaces for the end user. This is done with the help of existing open source tools.

Reflectance Transformation Imaging (RTI) is a non-invasive technique that enables the analysis of materials. Recent advancements in this technology, along with the availability of software for surface analysis through relighting, have improved the restoration and conservation of cultural heritage objects. However, there is a lack of appropriate benchmark data and reference light configurations, which makes it difficult to quantitatively compare and evaluate RTI data acquisitions. To address this, we have developed a dataset that can be used to assess the effectiveness of different surface light configurations for RTI acquisition. Additionally, we introduce methods to derive an ideal reference light configuration for a surface from its dense RTI acquisition. This dataset provides a standardized set of dense RTI acquisitions, accompanied by their corresponding reference light configurations that were obtained using our methods. This dataset can help researchers and developers to compare the performance of their approaches in solving the "Next Best Light Position" problem in RTI acquisition, which can ultimately improve the accuracy and efficiency of RTI acquisition and broaden its applicability in various fields.

In 2019, the Digital Archives went from being the National Archives of Norway’s own digital platform to being Norway’s joint national digital platform for receiving, preserving, and publishing digitized/media-converted historical archives. Whether you represent state, municipal, or private actors, small or large, the platform is free of charge for Norwegian archive institutions to use. The digital platform was first published in 1998, marking 25 years in 2023.

In 2017 the Swedish government launched a new Digital Strategy, with the overall goal for Sweden to be the best in the world in the use of digitalization opportunities. Museums, archives and libraries are important organizations when it comes to fulfilling that goal. Interest in museum collections is increasing, and with it the need to explore the collections. The Centre for Conservation of Cultural Property in Kiruna is a part of the national digitalization of Sweden’s cultural heritage. The department of Digitization offers Sweden’s museums and archives digitization of a wide range of photographic material: glass negatives, slides and plastic film. Nordiska Museet is Sweden’s largest museum of cultural history and stories about the life and people of the Nordic region. It is home to over one and a half million exhibits. The collections reflect Nordic lifestyle from the 16th century to the present day.

As digital collecting by museums, libraries, and archives has increased over recent years, the types and complexity of digital objects has also multiplied. The lessons learned and solutions created by the Digitization and Cataloging team at the Smithsonian’s National Museum of African American History and Culture (NMAAHC) in acquiring, processing, cataloging and preserving these new types of digital collections can assist others in identifying processes and workflows to preserve and make accessible the ever-expanding amount of digital collections that will grow into tomorrow’s digital cultural heritage.

The presentation showcases an innovative preservation project for engineering data based on the work of the E-ARK project. Together with Airbus, Piql engaged in developing an amended version of existing specifications towards a more industrial type, helping Airbus to become compliant with the eArchiving standards. We will highlight main findings from the project and present a path for other data owners of engineering data on how to overcome their preservation challenges. The paper presents a new and innovative preservation project of archiving complex engineering information packages based on the established best practices and standards in the E-ARK project.

The dynamic range that can be captured using traditional image capture devices is limited by their design. While an image sensor cannot capture the entire dynamic range in one exposure that the human eye can see, imaging techniques have been developed to help accomplish this. By incorporating high dynamic range imaging, the range of contrast captured is also increased, helping to improve color accuracy. Cultural heritage institutions face limitations when trying to capture color accurate reproductions of cultural heritage objects and materials. To mitigate this, a team of software engineers at RIT have developed a software application, BeyondRGB, to enable the colorimetric and spectral processing of six-channel spectral images. This work aims to incorporate high dynamic range imaging into the BeyondRGB computational pipeline to improve color accuracy further.

With initiatives like Collections as Data and Computational Archival Science, archives are no longer seen as a static documentation of objects, but as evolving sources of cultural and historical data. This work emphasizes the potential updates in preserving and documenting digital audiovisual (AV) content from a data perspective, considering the recent developments in natural language processing and computer vision tasks, as well as the emergence of interactive and embodied experiences and interfaces for innovatively accessing archival content. As part of a Swiss National Science Foundation Sinergia project, this work was able to work end-to-end with real-world AV archives like Télévision Suisse Romande (RTS). Drawing on an updated narrative model for mapping data that can be obtained from the content as well as the consumer, this work proposes an experimental attempt to build an ontology that formally sums up the potential new paradigm for preservation and accessibility from a data perspective for modern archives, in the hope of nurturing a digital and data-driven mindset for archive practices.

10:30 – 11:05

Coffee Break / Exhibits Open / Posters Available for Viewing

11:05 – 12:45

Session Chair: Amy McCrory, The Ohio State University

11:05

The main objective of this research is to think about the cultural heritage of “Ningunismo” and its definitions through the preservation and cataloging of materials from different media, contained in a horizontal and communal archive with free online access. The focus is mainly on the hermeneutic conflict, originated after the death of the founders and how the mass media distorted its existence. The archive is composed of 500 items, 170 agents, and 30 places. The creation of a tree of elements helped to relate the different formats. From newspaper notes, to abandoned web pages, to papers at sociology conferences, any publication about or mentioning "Ningunismo" was included. Virtual material pertaining to disused web pages turned out to be the most numerous.

11:25

In recent years, awareness of the importance of safeguarding intangible cultural heritage (ICH) to protect humanity's cultural diversity has increased. However, much remains to be done to document and archive this heritage for future generations. This proposal outlines an implemented solution for the digital preservation of intangible cultural heritage. For the living culture of the Schnitzelbänke, a part of the UNESCO world cultural heritage, the Basler Fasnacht [1], we implemented an archive that functions simultaneously as an archive, digital news portal and basis for the documentation of future events. This living web archive combines digitization, registration, meta dating, contextualizing, and storytelling. It acts as a digital archive by providing documents of past times, as a broadcast by almost direct transmission during the event taking place and as a news platform by announcing venues, members and performers, because the same technological solution offers a flexible stage for all of these digital practices.

11:45

This paper presents a case study in developing an online portal aggregating the archives of Judy Chicago, a contemporary feminist artist, held in multiple institutions. The project represents a model for collaboration, iterative development, and improving access and discoverability for both feminist art archives and for collections at smaller institutions. The project partners are two academic libraries, two museums, two foundations, and the artist’s studio.

12:05

GLAM institutions have continuously digitised their analogue material since the beginning of the 21st century. Also, the number of digital repositories has grown, and the pressure for open or FAIR data has increased. However, most digital assets need more visibility and usage. This is particularly problematic because storage and continuous migration are cumbersome and expensive tasks. To improve this situation, we design a participatory web platform with tools to make annotating, contextualising, and organising images and their meta information easier. The project “Participatory Knowledge Practices in Analogue and Digital Image Archives” is developed in cooperation with the photo archives of the Swiss Folklore Society (SSFS, Basel, Switzerland). The Swiss National Science Foundation funds it from 2021 to 2025, and here, we present an intermediate project status. The interdisciplinary research team consists of scholars from the Bern Academy of the Arts, the Cultural Studies and European Ethnology, and the Digital Humanities Lab of the University of Basel. The PIA team (Participatory Image Archives) aims to make the platform available to researchers and the general public to conduct Citizen Science research.

12:25

National archives set and implement national policies, recommendations and legislation for archiving standards which governmental and public actors need to obey. However, smaller actors in the field do not have this obligation and often they do not possess the resources or know-how to follow the set guidelines or to implement their own digital preservation workflows. This easily leads to the utilization of commercial information management solutions, which might lead to a vendor lock-in situation. In the worst case scenario, the software is merely a CMS or ERMS without any adherence to digital preservation standards. The OneClick SIP creator presented in this paper responds to this challenge. As a very welcome side effect, it also makes it easier to disengage from vendor lock-in situations by simplifying the creation of compliant information packages.

12:45 - 14:00

Lunch on Own

14:00 - 15:20

Session Chair: Kurt Heumiller, National Gallery of Art (US)

14:00

A prevailing question among conservators and imaging professionals producing cultural heritage documentation and research is how to obtain an ultraviolet-induced visible fluorescence (luminescence) image that allows us to assess the quality of the filtration used and the environment in which the image is being captured. The literature on this topic generally recommends the use of delicate and expensive control targets. This article describes a simple low-cost method to create and use a target that can aid in capturing consistent images, thus raising the confidence level of the images created.

14:20

Image sharpness is strongly dependent on lens aperture and camera position at capture. As high-end equipment is out of the reach of many museums, these choices are often mostly based on visual evaluations of image sharpness, which—though still possibly resulting in good quality images—is highly subjective and can lead to inconsistency. In the context of a broader effort to provide low-cost solutions for consistent high-quality museum photography, we propose a methodology for the characterization of the performance of a lens in terms of sharpness that enables the selection of the appropriate lens aperture and camera position for the capture of a sharp image of an object without the need for expensive equipment.

14:40

Imaging sensors are linear over a large part of their operational range. Nevertheless, their behavior becomes non-linear when approaching saturation. This is undesired if such sensors are used for scientific measurements. In this work, a simple and efficient off-chip method is proposed for image sensor linearization. First, the sensor response is characterized with a constant irradiance and a sequence of captures at several integration times. Then a 1D look-up table is calculated to compensate for the nonlinear range. This LUT can be applied to the raw sensor data before further postprocessing. The higher signal-to-noise ratio of captured data is used to demonstrate the benefit of the extended linear range. The proposed method can restore linearity while being easy to implement and computationally efficient.
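The look-up-table idea in the abstract above can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the `linear_fraction` threshold for choosing the linear region, the straight-line fit through the origin, and the assumption of dark-subtracted, strictly increasing measurements are all hypothetical choices made for illustration, and the numbers are synthetic.

```python
from bisect import bisect_left


def interpolate(x, xs, ys):
    """Piecewise-linear interpolation; xs must be strictly increasing."""
    if x <= xs[0]:
        return ys[0]
    if x >= xs[-1]:
        return ys[-1]
    i = bisect_left(xs, x)  # xs[i - 1] < x <= xs[i]
    x0, x1 = xs[i - 1], xs[i]
    y0, y1 = ys[i - 1], ys[i]
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


def build_linearization_lut(times, measured, linear_fraction=0.5):
    """Build a 1D LUT mapping raw sensor codes to linearized values.

    times    -- integration times of the characterization captures
    measured -- mean raw signal at each time (assumed dark-subtracted,
                positive, and strictly increasing)
    linear_fraction -- hypothetical threshold: samples below this fraction
                of the maximum signal are treated as the linear region
    """
    threshold = linear_fraction * max(measured)
    pairs = [(t, m) for t, m in zip(times, measured) if m <= threshold]
    # Least-squares slope of a line through the origin, linear region only.
    slope = sum(t * m for t, m in pairs) / sum(t * t for t, _ in pairs)
    # Signal an ideally linear sensor would have produced at each time.
    ideal = [slope * t for t in times]
    xs = [0.0] + [float(m) for m in measured]
    ys = [0.0] + ideal
    max_code = int(max(measured))
    return [interpolate(code, xs, ys) for code in range(max_code + 1)]


# Synthetic characterization: the response compresses above a signal of 400.
times = [1, 2, 3, 4, 5, 6, 7, 8]
measured = [100, 200, 300, 400, 450, 500, 550, 600]
lut = build_linearization_lut(times, measured)

# Apply the LUT to raw data before any further processing.
raw_pixels = [250, 450, 475, 600]
linear_pixels = [lut[v] for v in raw_pixels]
```

In this synthetic case, raw values in the compressed range are mapped back onto the line fitted in the unsaturated range, while values in the linear range pass through unchanged.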

15:00

Color targets come in different designs, sizes and surface finishes. A high quality color target such as the Next Generation Target (NGT), designed for the Library of Congress, has a glossy finish that makes it sensitive to the light-setup geometry. When the NGT color target is captured orthogonally, i.e. both the camera and the light share the same plane and lie on the normal of the target’s surface, it is not possible to completely eliminate the strong reflections caused by the camera/light geometry, even with cross-polarization in place and even if the camera/light setup is tilted at different angles, unlike for less glossy color targets such as the X-Rite SG CC. We demonstrate in this paper that it is possible, however, to deploy a mosaic approach: capturing the NGT color target at a tilted angle, masking out the reflections, and composing a rectified mosaic image out of only the clear parts of the target. The resultant ICC color correction profile for the mosaic image proves viable for use and satisfies all the necessary metrics for ISO level "A" when it comes to color calibration and color accuracy.

19:30 – 22:30

After a tour or exploring Oslo, join colleagues at the conference reception. Location details will be provided to registered attendees prior to the event.

Thursday 22 June 2023

9:00 – 10:00

Session Chair: Robert Kastler, MOMA (US)

From Smells of Collections to Collections of Smells

Matija Strlic, heritage scientist, Heritage Science Lab, University of Ljubljana (Slovenia)

The vast majority of objects in collections are only ever appreciated visually. Yet, archival and library visitors often refer to the smell of paper as one of the major sources of enjoyment: the heady mix of aromas of hay, bitter almonds, and vanilla is universally appreciated. While perfumes themselves are perhaps the most evident objects that are meant to be enjoyed olfactorily, the smell of old books is a consequence of their active chemical degradation. Yet other smells are associated with object use or are perhaps the result of conservation treatments. One aspect is certain: olfactory experiences are valuable to the public and make learning experiences more memorable. We have only recently started developing clearer guidelines for introduction of smells into exhibitions where a variety of issues need to be considered, from conservation to health-related. Similarly, guidelines are being developed on how to preserve smells in their own right: it is not just the chemical composition that needs to be archived but also the broader social and historical significance of a smell, as this can change through time as well. The recent establishment of archives of perfumes and smells is a testament to the fact that olfactory engagement is here to stay.

10:00 – 10:15

Session Chair: Hana Beckerle, Library of Congress (US)

Archaeological textiles are often highly fragmented, and solving a puzzle is needed to recover the original composition and respective motifs. The lack of ground truth and the unknown number of original artworks that the fragments come from complicate this process. We clustered the RGB images of the Viking Age Oseberg Tapestry based on their texture features. Classical texture descriptors as well as modern deep learning were used to construct a texture feature vector that was subsequently fed to the clustering algorithm. We anticipated that the clustering outcome would give indications of the number of original artworks. While two clusters of different textures emerged, this finding needs to be taken with care due to a broad range of limitations and lessons learned.

Reflectance Transformation Imaging (RTI) is a multi-light imaging technique that uses a camera in a fixed position, orthogonal to the studied surface, while the light position is varied for each image captured. This allows for the reconstruction of a surface's visual appearance and the characterization of the surface by providing additional information on surface deformations and local micro-geometry. RTI was applied to historical model glass corroded in the presence of volatile organic compounds (VOCs) to visualize early stages of corrosion. RTI was used to create relighting visualizations and generate maps based on statistical descriptors derived from the local reflectance distribution of the pixels. Selected maps were able to assist in the quantification of corrosion signs, i.e., fine cracks and salt neocrystallizations (SN), on a more global scale as compared to digital microscopy (DM). Therefore, RTI could provide an imaging solution for the characterization of corrosion signs on transparent colourless glass surfaces, which could not be visualized using simple RGB photography with either transmitted or reflected light.

In this paper, the appearance of gilded surfaces is measured and modelled to guide conservation and restoration. Three types of gilding are studied: water gilding, oil gilding, and “imitation” gilding. Each of the three materials presents an appearance related to its manufacturing method. An imaging-based acquisition system is used to measure the bidirectional reflectance of the materials, and their BRDF is modelled. Using perceptual metrics, the appearance of the three different materials is analyzed and used to guide conservation and restoration treatments.

Among people working with cultural heritage objects, the ability to make the best possible copies has always been one of the important topics. In recent years, the development of techniques that make it possible to make three-dimensional documentation of heritage objects and the capabilities of software that controls cutter machines have made it possible to make material copies in wood, among other things. Unfortunately, the lack of standards for the creation of three-dimensional documentation, as well as the relatively unique nature of this type of release, translate into a lack of common understanding of the real possibilities and limitations of these technologies. What is needed is an analysis of the path of execution of this type of project, which would discuss the planned exhibition or educational goals, the assumed technological parameters achieved in the course of implementation and an evaluation of the results achieved. The accumulation of such results will not only help facilitate the implementation of future projects, but will also be the first step towards the creation of standards and quality norms for this type of product.

Handwriting significantly contributes to the task of writer identification and verification for modern and historical documents. This work developed a writer verification system for ancient Hebrew square-script manuscripts, mainly based on the edge-direction feature. Two configurations within the proposed system are examined, i.e., character-based edge-direction feature extraction and extraction techniques of handwriting shape representation that may drive the system performance. A classification-based verification approach, utilizing a Support Vector Machine (SVM) as the classifier, is employed to evaluate the performance of the two configurations. This study has confirmed that the skeleton-based shape representation technique outperforms the edge detection technique used in the predecessor approach. Furthermore, a character-based writer verification system provides the corresponding scholars and experts with an alphabetical investigation to identify the uniqueness of each writer’s handwriting.

10:15 – 11:00

Session Chair: Fenella France, Library of Congress (US)

10:15

Multiscale and multimodal strategies and systems for change capture and tracking of CH assets, Alamin Mansouri, Université de Bourgogne Franche-Comté (France)

10:30

Computational methods for change studying (characterization, visualisation, and monitoring) of CH assets, Robert Sitnik, Warsaw University of Technology (Poland)

10:45

Application: Change during the alteration and conservation of CH artefacts, Clotilde Boust, Centre for Research and Restoration of the Museums of France (France)

11:00 – 12:15

Join the interactive paper authors in the Aula foyer to discuss their work. Coffee/tea available in the Aula courtyard.

12:15 – 12:55

Session Chair: Carolina Gustafsson, Stiftelsen Föremålsvård i Kiruna (Sweden)

12:15

One of the continued challenges for preservation resources is the demand for objective data to make informed retention and withdrawal decisions. Discussions within the shared print and print repository communities have circled around the integral question pertaining to the selection process of books to be incorporated into a national shared print system, namely the minimum number of copies such a system must maintain. The challenge has been knowing the condition of those volumes that are withdrawn or retained, since all decisions have been based solely upon a shared catalog where partners do not have data to know the condition of others’ volumes. This conundrum led to a national research initiative funded by the Mellon Foundation, “Assessing the Physical Condition of the National Collection,” to create a baseline of understanding of the actual condition of the national collection in research libraries and collections. The project undertook an extensive assessment capturing data from 500 “identical” volumes each from 5 different research libraries and analyzing the dataset to answer the following questions: What is the general condition of library collections in the 1840-1940 period? Can the condition of collections be predicted by catalog or physical parameters? What collection assessment tools help determine a book’s life expectancy? Filling the gaps in knowledge for understanding the physicality of our collections is helping us identify at-risk collections and explain the high percentage of dissimilar “same” volumes due to the impact of paper composition. Predictive modelling and simple assessment tools allow more accurate prediction of good and poor-quality copies of books, as well as what is typical and atypical for specific decades.

12:35

As complementary technologies evolve, data compression continues to be a foundational aspect of growing digital collections. In this study, selected lossless still image codecs from 1992 through 2022 were benchmarked across a variety of efficiency and performance measures using reference images from cultural heritage. Additionally, entropy estimates were calculated by source image to assist in characterizing image information and evaluating encoder efficiency against assessed feasible compression limits. Encoder designs and compression techniques were also examined in the context of the study's measured results.
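To illustrate the idea of comparing an encoder against an entropy estimate, here is a minimal Python sketch. The abstract does not say which estimator the study used; the zero-order (memoryless) Shannon entropy shown here is an assumption chosen for simplicity, and the function names and toy data are hypothetical. Note that a zero-order estimate is only a bound for coders that treat samples as independent; real image codecs exploit spatial correlation and can compress below it.

```python
import math
from collections import Counter


def zero_order_entropy(samples):
    """Shannon entropy, in bits per sample, of the empirical value distribution."""
    counts = Counter(samples)
    total = len(samples)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def bits_per_sample(compressed_bytes, sample_count):
    """Average number of bits an encoder actually spent per sample."""
    return 8 * compressed_bytes / sample_count


# Toy "image": eight samples drawn evenly from four grey levels (made-up data).
pixels = [0, 0, 1, 1, 2, 2, 3, 3]
estimate = zero_order_entropy(pixels)

# Hypothetical encoder output size for the same samples, in bytes.
achieved = bits_per_sample(compressed_bytes=3, sample_count=len(pixels))

print(f"entropy estimate: {estimate:.2f} bits/sample")
print(f"encoder achieved: {achieved:.2f} bits/sample")
```

The gap between the two printed figures is one simple way to express how close an encoder comes to an estimated limit for a given source image.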

Lunch Break on own

12:55 – 14:00

14:00 – 15:00

Session Chair: Michael J. Bennett, University of Connecticut (US)

14:00

The Smithsonian Institution Digitization Program Office’s Collection Digitization team develops and designs a “three-pronged” workflow approach to mass digitization of museum collections, called the Physical, Imaging, and Virtual Workflows. This approach addresses proper handling of objects, optimizes capture throughput, and streamlines the processing and delivery of images through automation. The Physical Workflow Design defines the production space and safe movement of objects from storage to the digitization production space; the Imaging Workflow Design defines the technical specifications, file deliverables, and the results of our ‘Item Driven Image Fidelity’ (IDIF) testing; and finally, the Virtual Workflow Design defines the lifecycle of the digital file, from creation to online access, describing the various data processes required for success.

14:20

While we now have mature, proven guidelines (FADGI) which provide solid recommendations on how to create proper master files, beyond targets and the ability to measure them, the cultural heritage community lacks easily consumable, flexible specifications for conducting actual projects. Moreover, there is a general lack of examples of FADGI-compliant Statements of Work, leading to much re-invention of the wheel and even to library and archival personnel deciding to not use FADGI at all. This puts inexperienced users at a decided disadvantage and creates a formidable barrier to entry for new practitioners who want to use the FADGI guidelines on their projects. As discussed in this paper, a DIO (or Digitization Information Object) is a data model encompassing all technical parameters of a still image digitization project. At its core, the DIO schema is intrinsically tied to FADGI, and enforces FADGI compliance through its use. It provides a common, machine-readable instruction set for digitization-facing software programs. This allows consuming applications to be quickly and precisely configured per-project to specify output image parameters, configure post-processing workflows, verify both working files and huge batches of completed content at scale, and even to provide plain-English text for a project's Statement of Work -- all from the same DIO JSON file.

14:40

Digitization is a key process in preserving, protecting and providing continued long-term access to archive materials. The competencies required in digitization pose challenges to individuals and organizations engaging in digitization activities. We take a competency mapping approach to support digitization skills anticipation. Current and anticipated digitization competencies in public and private organizations in Finland were surveyed using a digital preservation competency framework. Results show that organizations see digitization as requiring a wide set of competencies ranging from policies and legal issues to practical organization and technical delivery of digitization. There is an anticipated need to optimize organizational and service capabilities in the near future. The desired target state is quite advanced overall, regardless of current capabilities. The largest self-identified competency gaps exist in strategic and technical approaches to digital archiving and digital access to archives. Our results inform continued professional development and planning of future digitization efforts.

15:00 – 17:35

Session Chair: Yosi Pozeilov, Los Angeles County Museum of Art (US)

15:00

Some cultural heritage collections such as manuscripts, scrolls, books, sheet and folia that are faded, damaged, or otherwise unreadable present challenges for curators, collections professionals, scholars, and researchers looking to understand collections more fully. Seeking to uncover distinct features of objects, they have employed modern imaging tools, including sensors, lenses, and illumination sources and thus positioned multispectral imaging as a critical method for cultural heritage imaging. However, cost and ease-of-use have been prohibiting factors. To address this, Rochester Institute of Technology received a grant from the National Endowment for the Humanities (PR-268783-20) to fund an interdisciplinary collaboration to develop a low-cost, portable imaging system with processing software that could be utilized by scholars accessing collections in library, archive, and museum settings, as well as staff working within these institutions. This article addresses our open source and extensible software applications, from the first iteration of software in 2020 to our current effort in 2022-23 which seeks to simplify both processes. An overview of the image capture and processing software to capture and visualize the spectral data offers a basis for demonstrating the possibilities for low-cost, low barrier-to-entry software on cultural heritage imaging, research, preservation, and dissemination.

15:20

A practical workflow to capture and process hyperspectral images in combined VNIR-SWIR ranges is presented and discussed. The pipeline demonstration is intended to increase the visibility of the possibilities that advanced hyperspectral imaging techniques can bring to the study of archaeological textiles. Emphasis is placed on the fusion of data from two hyperspectral devices. Every aspect of the pipeline is analyzed, from the practical and optimal implementation of the imaging setup to the choices and decisions that can be made during the data processing steps. The workflow is demonstrated on an archaeological textile belonging to the Paracas Culture (Peru, 200 BC - 100 AD ca.) and displays an example in which an inappropriate selection of the processing steps can lead to a misinterpretation of the hyperspectral data.

15:40 – 16:10

Afternoon Break

Join other attendees in the courtyard for a final beverage.

16:10

The contribution discusses a range of evaluation methods, based on (spectral) imaging techniques, for providing more reliable and reproducible appraisals of cleaning studies when compared to the subjective user-dependent scores that are currently used in the conservation profession. Specifically, novel agar spray cleaning tests on exposed ground and unvarnished oil paint mock-ups are reported as a case study to demonstrate cleaning efficacy and homogeneity metrics in practice, showcasing novel options that could be adapted for use within the conservation community.

16:30

Three endmember extraction methods (NFINDR, NMF and manual extraction) are compared in two stages (pre- and post-intervention) of the same painting, a Maternity on copper plate, under study for the formulation of a hypothesis on the authorship and the dating. The endmembers are extracted from spectral images in the 400-1000 nm range. The main aim is to determine whether simple automatic endmember extraction is enough to identify pigments and repainted areas in this case study.

16:50

Transparent glass frames are often used to exhibit, handle, and store ancient manuscripts (folia or fragments) across museums, libraries, and collections. Once the manuscripts are carefully sealed (glazed), re-opening the frame to analyze the glazed manuscript is not always desirable, given their fragile state of preservation. Therefore, microimaging with IR and UV light sources above the glass frame is a frequently used method for the preliminary (qualitative) classification of the inks applied on the manuscripts. Building on this well-established methodology, this study explores the potential of spectral imaging technology for the quantitative analysis of glazed manuscripts. The present research focuses on the colorimetric analysis of iron-gall and carbon black inks applied on a papyrus substrate, aiming to quantify the effect of glass frames on the acquired images. The obtained results show that the quantitative colorimetric analysis of the inks above the glass frame can be used for the preliminary classification of the inks, hence minimizing the need to open the glass frames for further analysis.
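As a rough illustration of what a quantitative colorimetric comparison can look like, the sketch below computes the CIE76 colour difference between two CIELAB readings of the same ink, one taken directly and one through glass. The abstract does not state which colour-difference metric the authors used, so CIE76 is an assumption here, and the function name and L*a*b* values are invented for the example rather than taken from the study.

```python
import math


def delta_e_cie76(lab1, lab2):
    """CIE76 colour difference: Euclidean distance between two (L*, a*, b*) triples."""
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(lab1, lab2)))


# Made-up CIELAB readings of one ink patch (not measured data).
ink_without_glass = (30.0, 2.0, 4.0)
ink_through_glass = (33.0, 2.0, 0.0)

shift = delta_e_cie76(ink_without_glass, ink_through_glass)
print(f"colour shift introduced by the glass: dE = {shift:.1f}")
```

Comparing this shift with the colour difference between two ink types would indicate whether the glass is likely to interfere with a preliminary classification.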

17:10

Hyperspectral imaging techniques are widely used in cultural heritage for documentation and material analysis. Pigment classification of an artwork is an essential task. Several algorithms have been used for hyperspectral data classification, some more appropriate than others depending on the application domain. However, very few have been applied to pigment classification tasks in the cultural heritage domain. Most of these algorithms work effectively for spectral shape differences and might not perform well for spectra that differ in magnitude or for spectra that are nearly similar in shape but belong to two different pigments. In this work, we evaluate the performance of different supervised algorithms and some machine learning models for the pigment classification of a mockup using hyperspectral imaging. The results obtained show the importance of choosing appropriate algorithms for pigment classification.
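The distinction between shape-sensitive and magnitude-sensitive measures can be seen in a small Python sketch. It contrasts the spectral angle, which ignores overall scaling, with the Euclidean distance, which does not. These two measures are chosen here only as familiar examples; the abstract does not list the algorithms that were evaluated, and the spectra below are made-up values.

```python
import math


def spectral_angle(a, b):
    """Angle in radians between two spectra; unchanged when either is rescaled."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    # Clamp to [-1, 1] to guard against floating-point rounding before acos.
    cosine = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cosine)


def euclidean_distance(a, b):
    """Straight-line distance between two spectra; sensitive to magnitude."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


# Made-up reflectance values at four wavelengths.
reference = [0.10, 0.20, 0.40, 0.30]
darker = [0.5 * v for v in reference]  # same shape, half the magnitude

print(f"spectral angle:     {spectral_angle(reference, darker):.6f} rad")
print(f"Euclidean distance: {euclidean_distance(reference, darker):.4f}")
```

The angle is essentially zero while the Euclidean distance is not, so a purely shape-based measure would treat the two spectra as the same material even when the difference in magnitude carries information.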

17:30 – 17:35

Closing remarks

Friday 23 June 2023: CHANGE Program

Please Note: The CHANGE Program will take place at the National Museum, Brynjulf Bulls plass 3, 0250 Oslo. Registration is free, but required. Register for the CHANGE Program. Deadline June 16.

9:00 – 9:20

Welcome and CHANGE Overview, Sony George, Norwegian University of Science and Technology (Norway)

9:20 – 10:30

MULTISCALE AND MULTIMODAL STRATEGIES AND SYSTEMS FOR CHANGE CAPTURE AND TRACKING OF CH ASSETS

Imaging techniques for change documentation and monitoring of stained-glass windows, Agnese Babini, Norwegian University of Science and Technology (Norway)

Quality evaluation in CH digitization, Dipendra Mandal, Norwegian University of Science and Technology (Norway)

Portable multimodal system for CH surface measurement and monitoring, Athanasia Papanikolaou, Warsaw University of Technology (Poland)

Microscopic 3D imaging and conservation, Yoko Arteaga, Centre for Research and Restoration of the Museums of France (France)

COMPUTATIONAL METHODS FOR CHANGE STUDYING (CHARACTERIZATION, VISUALIZATION, AND MONITORING)

Registration techniques for differential and multimodal data, Evdokia Saiti, Norwegian University of Science and Technology (Norway)

Analysis and visualization of multimodal image data in CH surfaces monitoring, Sunita Saha, Warsaw University of Technology (Poland)

Capture and characterization of change in the appearance of CH objects surface, Ramamoorthy Luxman, Université de Bourgogne Franche-Comté (France)

Appearance change assessment: Link between local geometry and global appearance descriptors, David Lewis, Université de Bourgogne Franche-Comté (France)

APPLICATIONS: CHANGE DURING THE ALTERATION AND CONSERVATION OF ARTEFACTS

Imaging-based documentation and analysis for change monitoring of novel dry-cleaning restoration/conservation methods for unvarnished canvas paintings, Jan Cutajar, University of Oslo (Norway)

Analysis and assessment of degradation of polychrome metal artworks, Laura Brambilla, HES-SO University of Applied Sciences and Arts Western Switzerland (Switzerland)

Analysis and monitoring of degradation of historical glasses, Deepshikha Sharma, Swiss National Museum (Switzerland)

Low-budget portable device for technical imaging of cultural heritage artifacts, Alessandra Marrocchesi, University of Amsterdam (the Netherlands)

Characterization of surface change of historical metals by employing imaging and computer vision, Amalia Siatou, HES-SO University of Applied Sciences and Arts Western Switzerland (Switzerland) and Université de Bourgogne Franche-Comté (France)

Explore the posters and talk with the Early Stage Researchers on their presented projects.

10:30 – 11:00

11:00 – 12:00

11:00

Digital color reconstructions of cultural heritage using color-managed imaging and small-aperture spectrophotometry, Roy S. Berns, Gray Sky Imaging (US)

11:30

Telling Humanity’s Story: Democratizing Technology to Empower Cultural Narratives, Mark Mudge, Cultural Heritage Imaging (US)

12:00 – 13:00

GROUP LUNCH

13:00 – 14:00